ActiveMQ Artemis address model explained with examples in .NET

To make the best use of Apache ActiveMQ Artemis, you need to understand the messaging model of the broker. In this article I will explain the rules and principles that govern the Artemis address model and how they may affect your applications. To make things easier to follow, I’ve created several code examples using ArtemisNetClient. As always, you can find them on GitHub.

.NET Client for ActiveMQ Artemis

ArtemisNetClient is a lightweight library built on top of AmqpNetLite. It tries to fully leverage Apache ActiveMQ Artemis capabilities. It has a built-in configurable auto-recovery mechanism, supports transactions, exposes an asynchronous API, and supports the ActiveMQ Artemis management API.

This last feature will be especially important for the purpose of this blog post, as we won’t need to dig into the broker internals (broker.xml) or tinker around with the Artemis management console to demonstrate more sophisticated examples.

Addresses, queues, and routing types

The messaging model that powers Apache ActiveMQ Artemis seems pretty straightforward, considering that it is built on top of just three simple concepts: addresses, queues, and routing types. These are the bits that bind producers and consumers together and allow messages to flow from one application to another.

The overarching idea is that producers never send messages directly to queues. In fact, a producer doesn’t even know whether a message will be delivered to any queue at all. Instead, the producer can only send a message to an address. The address represents a message endpoint: it receives messages from producers and pushes them to queues. The address knows exactly what to do with each message it receives. Should it be appended to a single queue or to many? Or should it be discarded? The rules for that are defined by the routing type.

There are two routing types available in Apache ActiveMQ Artemis: Anycast and Multicast. The address can be created with either or both routing types.

When an address is created with the Anycast routing type, all messages sent to it are distributed evenly, in round-robin fashion, among all the queues attached to it. With the Multicast routing type, every queue bound to the address receives its very own copy of each message. Once a message has been pushed to a queue, it can be picked up by one of the consumers attached to that particular queue.
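To make the difference between the two routing types concrete, here is a toy, in-memory model in plain C# with no broker involved. Queues are modeled as lists, routing types as the strings "Anycast" and "Multicast", and the `RouteAll` helper is hypothetical. It assumes strict round-robin for anycast, which only approximates what the broker actually does.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Toy model only: queues are plain lists and "routing" decides which lists
// receive each message. This is not how the broker is implemented.
Dictionary<string, List<string>> RouteAll(string routingType, string[] queues, IEnumerable<string> messages)
{
    var result = queues.ToDictionary(q => q, _ => new List<string>());
    var next = 0; // round-robin cursor, only used for anycast

    foreach (var message in messages)
    {
        if (routingType == "Anycast")
        {
            // Anycast: exactly one queue receives the message, in round-robin order.
            result[queues[next]].Add(message);
            next = (next + 1) % queues.Length;
        }
        else
        {
            // Multicast: every bound queue receives its own copy.
            foreach (var queue in queues)
                result[queue].Add(message);
        }
    }

    return result;
}

var batch = Enumerable.Range(1, 4).Select(i => $"Message {i}").ToArray();
var anycast = RouteAll("Anycast", new[] { "q1", "q2" }, batch);
var multicast = RouteAll("Multicast", new[] { "q1", "q2" }, batch);

foreach (var (queue, received) in anycast)
    Console.WriteLine($"Anycast   {queue}: {string.Join(", ", received)}");
foreach (var (queue, received) in multicast)
    Console.WriteLine($"Multicast {queue}: {string.Join(", ", received)}");
```

With four messages and two queues, anycast leaves each queue with half of the messages (q1 gets 1 and 3, q2 gets 2 and 4), while multicast gives both queues all four.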

That’s about it regarding the theory. Now let’s see how this works in practice.

Anycast Routing Type

Let’s consider a simple producer application.

Producer

using ActiveMQ.Artemis.Client;

var connectionFactory = new ConnectionFactory();
var endpoint = Endpoint.Create(host: "localhost", port: 5672, "artemis", "artemis");
var connection = await connectionFactory.CreateAsync(endpoint);

var address = "my-anycast-address";

// Attach a producer to the address using the Anycast routing type.
await using var producer = await connection.CreateProducerAsync(address, RoutingType.Anycast);

Console.WriteLine("Producer created.");
Console.WriteLine($"Address: {address}");
Console.WriteLine($"RoutingType: {RoutingType.Anycast}");

// Send ten messages, one per second.
for (var counter = 1; counter <= 10; counter++)
{
    var msg = new Message($"Message {counter}");
    await producer.SendAsync(msg);
    Console.WriteLine($"Message '{counter}' sent.");
    await Task.Delay(TimeSpan.FromSeconds(1));
}

And its counterpart consumer app:

Consumer

using ActiveMQ.Artemis.Client;

var connectionFactory = new ConnectionFactory();
var endpoint = Endpoint.Create(host: "localhost", port: 5672, "artemis", "artemis");
var connection = await connectionFactory.CreateAsync(endpoint);

var address = "my-anycast-address";

// Attach a consumer to the address using the Anycast routing type.
// Note that no queue is named here; the broker creates one on our behalf.
await using var consumer = await connection.CreateConsumerAsync(address, RoutingType.Anycast);

Console.WriteLine("Consumer created.");
Console.WriteLine($"Address: {address}");
Console.WriteLine($"RoutingType: {RoutingType.Anycast}");

// Receive messages forever, acknowledging each one before printing it.
while (true)
{
    var msg = await consumer.ReceiveAsync();
    await consumer.AcceptAsync(msg);
    Console.WriteLine($"Message '{msg.GetBody<string>()}' received.");
}

If we run a single instance of the producer and two instances of the consumer application, we should get the following output:

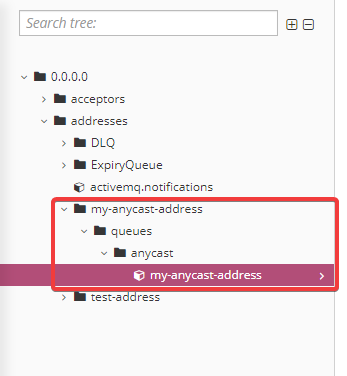

Looking at the code snippets above, you may wonder: where is the queue? It’s an excellent question because, indeed, there doesn’t seem to be one. A look at the Artemis Management Console solves the mystery.

The broker created the queue on our behalf and named it after the address. This is a small workaround introduced in ActiveMQ Artemis to simplify integration with old JMS clients. In the JMS world, you can actually send a message to a queue. It hardly ever makes sense to do so (we will see why soon), but it’s possible.

ArtemisNetClient allows you to explicitly attach to this queue. You can replace the line:

await using var consumer = await connection.CreateConsumerAsync(address, RoutingType.Anycast);

with:

await using var consumer = await connection.CreateConsumerAsync(address: address, queue: address);

and you will end up with almost exactly the same behavior.

There is a small, subtle difference, though. If you try to run the amended version of the consumer app before the producer is up and running, you will end up with an exception. This is a consequence of how direct attachment works. It uses a mechanism called FQQN (Fully Qualified Queue Name), which allows the client to specify the address and the queue directly, with the caveat that both the address and the queue must already exist.
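In Artemis, a fully qualified queue name joins the address and the queue with a double colon, as in `address::queue`. ArtemisNetClient takes care of this when you pass both `address` and `queue` to `CreateConsumerAsync`, so the sketch below only illustrates the naming scheme. `ToFqqn` and `ParseFqqn` are hypothetical helpers, not part of the library.

```csharp
using System;

// Hypothetical helpers that only build and split the "address::queue" string;
// they are not part of ArtemisNetClient, which handles FQQNs for you.
string ToFqqn(string address, string queue) => $"{address}::{queue}";

(string Address, string Queue) ParseFqqn(string fqqn)
{
    var separator = fqqn.IndexOf("::", StringComparison.Ordinal);
    if (separator < 0)
        throw new ArgumentException($"'{fqqn}' is not a fully qualified queue name.");
    return (fqqn[..separator], fqqn[(separator + 2)..]);
}

var name = ToFqqn("my-anycast-address", "my-anycast-address");
Console.WriteLine(name); // prints my-anycast-address::my-anycast-address

var parts = ParseFqqn(name);
Console.WriteLine($"Address: {parts.Address}, Queue: {parts.Queue}");
```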

We can also create multiple queues under the same address using the Anycast routing type. This can be done with the ITopologyManager interface, which allows us to declare a queue before we try to attach to it.

To illustrate this, I created a new version of the consumer app. Let’s call it Consumer2. It’s slightly more complex. It doesn’t have any hard-coded topology, and to run it you need to provide three parameters: the address name, the queue name, and the routing type. It creates a connection to the broker and, with the help of the ITopologyManager interface, declares a queue under the given address using the specified routing type. With the queue defined, it then creates an instance of IConsumer and starts receiving messages in an infinite loop.

Consumer2

using ActiveMQ.Artemis.Client;

// Usage: Consumer2 <address> <queue> <routing type>, e.g. Consumer2 my-anycast-address q1 Anycast
if (args.Length < 3)
{
    Console.WriteLine("Usage: Consumer2 <address> <queue> <Anycast|Multicast>");
    return;
}

var address = args[0];
var queue = args[1];

if (!Enum.TryParse(args[2], ignoreCase: true, out RoutingType routingType))
{
    throw new ArgumentException($"'{args[2]}' is not a valid RoutingType.");
}

var connectionFactory = new ConnectionFactory();
var endpoint = Endpoint.Create(host: "localhost", port: 5672, "artemis", "artemis");
var connection = await connectionFactory.CreateAsync(endpoint);

// Declare the queue (creating the address if needed) before attaching to it,
// because attaching by address and queue name requires both to exist.
var topologyManager = await connection.CreateTopologyManagerAsync();
await topologyManager.DeclareQueueAsync(new QueueConfiguration
{
    Address = address,
    Name = queue,
    RoutingType = routingType, // use the routing type passed on the command line
    AutoCreateAddress = true,
    Durable = true,
});

await using var consumer = await connection.CreateConsumerAsync(address: address, queue: queue);

Console.WriteLine("Consumer created.");
Console.WriteLine($"Address: {address}");
Console.WriteLine($"Queue: {queue}");
Console.WriteLine($"RoutingType: {routingType}");

while (true)
{
    var msg = await consumer.ReceiveAsync();
    await consumer.AcceptAsync(msg);
    Console.WriteLine($"Message '{msg.GetBody<string>()}' received.");
}

If we run all our applications, we should get the following output:

In this setup, the producer sends messages to the address, and the broker distributes them between two queues, q1 and q2. Each queue has two competing consumers that receive and process its messages.

As you can see, building topologies with multiple queues defined under the same address with the anycast routing type doesn’t make much sense. Because round-robin distribution is applied both to the queues attached to the address and to the consumers attached to each queue, the observable distribution of messages would be almost exactly the same as if we simply attached all the consumers to a single queue.
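The two levels of round-robin can be checked with a small in-memory model in plain C#. It assumes strict round-robin at both levels (address to queue, queue to consumer), which the broker only approximates because real delivery also depends on timing and flow control. `Distribute` and the queue and consumer names are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Toy model: round-robin first across queues, then across the consumers
// attached to the chosen queue. Returns how many messages each consumer got.
Dictionary<string, int> Distribute(int messageCount, Dictionary<string, string[]> consumersByQueue)
{
    var queues = consumersByQueue.Keys.ToArray();
    var counts = consumersByQueue.Values.SelectMany(c => c).ToDictionary(c => c, _ => 0);
    var nextConsumer = queues.ToDictionary(q => q, _ => 0);

    for (var i = 0; i < messageCount; i++)
    {
        var queue = queues[i % queues.Length];          // address -> queue
        var consumers = consumersByQueue[queue];
        var consumer = consumers[nextConsumer[queue]];  // queue -> consumer
        nextConsumer[queue] = (nextConsumer[queue] + 1) % consumers.Length;
        counts[consumer]++;
    }

    return counts;
}

var twoQueues = Distribute(10, new Dictionary<string, string[]>
{
    ["q1"] = new[] { "c1", "c2" },
    ["q2"] = new[] { "c3", "c4" },
});
var oneQueue = Distribute(10, new Dictionary<string, string[]>
{
    ["q1"] = new[] { "c1", "c2", "c3", "c4" },
});

Console.WriteLine("Two queues: " + string.Join(", ", twoQueues.Select(kv => $"{kv.Key}={kv.Value}")));
Console.WriteLine("One queue:  " + string.Join(", ", oneQueue.Select(kv => $"{kv.Key}={kv.Value}")));
```

For ten messages, both topologies hand out the same per-consumer counts (two consumers get three messages, two get two); only which consumer sees which message differs.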

Multiple queues defined under the same anycast address are an unusual, exotic configuration with negligible practical applicability. I dare say the same applies to the anycast routing type in general. It’s good for demos, but I haven’t seen any production scenario where I would use it instead of multicast.

Multicast Routing Type

The anycast routing type is good for demos because the broker automatically creates a durable queue for it. In the simplest scenario, you can run your producer and consumer apps without worrying about any preliminary configuration. It just works. With multicast, things can be a bit confusing at first.

Let’s consider slightly modified versions of the apps from the initial demo:

ProducerMulticast

using ActiveMQ.Artemis.Client;

var connectionFactory = new ConnectionFactory();
var endpoint = Endpoint.Create(host: "localhost", port: 5672, "artemis", "artemis");
var connection = await connectionFactory.CreateAsync(endpoint);

var address = "my-multicast-address";

// Attach a producer to the address using the Multicast routing type.
await using var producer = await connection.CreateProducerAsync(address, RoutingType.Multicast);

Console.WriteLine("Producer created.");
Console.WriteLine($"Address: {address}");
Console.WriteLine($"RoutingType: {RoutingType.Multicast}");

// Send ten messages, one per second.
for (var counter = 1; counter <= 10; counter++)
{
    var msg = new Message($"Message {counter}");
    await producer.SendAsync(msg);
    Console.WriteLine($"Message '{counter}' sent.");
    await Task.Delay(TimeSpan.FromSeconds(1));
}

ConsumerMulticast

using ActiveMQ.Artemis.Client;

var connectionFactory = new ConnectionFactory();
var endpoint = Endpoint.Create(host: "localhost", port: 5672, "artemis", "artemis");
var connection = await connectionFactory.CreateAsync(endpoint);

var address = "my-multicast-address";

// Attach a consumer to the address using the Multicast routing type.
// The broker creates a volatile queue with a generated name for this consumer.
await using var consumer = await connection.CreateConsumerAsync(address, RoutingType.Multicast);

Console.WriteLine("Consumer created.");
Console.WriteLine($"Address: {address}");
Console.WriteLine($"RoutingType: {RoutingType.Multicast}");

// Receive messages forever, acknowledging each one before printing it.
while (true)
{
    var msg = await consumer.ReceiveAsync();
    await consumer.AcceptAsync(msg);
    Console.WriteLine($"Message '{msg.GetBody<string>()}' received.");
}

If you run the applications, starting with the consumers, you should get the following output:

Each instance of the consumer app gets its very own copy of every message. This is a typical Pub/Sub scenario.

Things get a bit tricky when you start the consumer apps with a small delay (at least 1 second) after the producer. The output might be unexpected: a few initial messages are lost! What happened?

To answer this question, we need to understand how ActiveMQ Artemis treats multicast addresses in terms of queue creation. When you start sending messages to a multicast address, no queue is created automatically. Without a queue, there is no place where the messages can be stored, and if there is no such place, the messages are simply discarded.

When you attach the first consumer to the address, the broker creates a volatile queue with a randomly generated name.

The fact that the queue is volatile simply means it will be deleted as soon as your consumer app disconnects from the broker. As you can imagine, with the queue destroyed, all unprocessed messages are gone as well.

The same happens in case of a network blip. If you lose the connection to the broker, ArtemisNetClient automatically reconnects, and your consumer is re-attached to the same address but to a different queue. This means there is a high chance that your application has missed some messages.
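The volatile-queue lifecycle described above can be summarized with a toy model in plain C#: the multicast address is a dictionary of volatile queues, the hypothetical `Attach` creates a fresh queue under a random name, and `Detach` deletes it together with anything still in it. The model ignores consumption entirely and only tracks which messages ever reach a queue.

```csharp
using System;
using System.Collections.Generic;

// Toy model of a multicast address that only has volatile queues.
// Real queue names are generated by the broker; here a GUID stands in.
var queues = new Dictionary<string, List<string>>();

void Send(string message)
{
    // With no queue bound to the address, this loop does nothing:
    // the message has nowhere to go and is effectively discarded.
    foreach (var queue in queues.Values)
        queue.Add(message);
}

string Attach()
{
    // Attaching a consumer creates a fresh, empty volatile queue.
    var name = Guid.NewGuid().ToString();
    queues[name] = new List<string>();
    return name;
}

// Disconnecting deletes the volatile queue and whatever it still holds.
void Detach(string name) => queues.Remove(name);

Send("Message 1");      // lost: no queue exists yet
var first = Attach();
Send("Message 2");      // reaches the first volatile queue
Detach(first);          // app stopped or connection dropped
Send("Message 3");      // lost again
var second = Attach();  // reconnect: a brand-new queue with a new name
Send("Message 4");

Console.WriteLine(string.Join(", ", queues[second])); // prints Message 4
```

Only `Message 4` ends up in the final queue: `Message 1` and `Message 3` were sent while no queue existed, and `Message 2` only ever lived in the first queue, which was deleted on detach.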

To guarantee that no messages are lost, we need to create a durable queue under a multicast address. Our Consumer2 app can do exactly that.

We can start and stop the consumer app, and no message will be lost. The queue won’t be destroyed until we explicitly remove it.

This can lead to a problem of its own. Unlike a volatile queue, a durable queue keeps every message until it is consumed. If your consumer is down for whatever reason and the producer keeps pushing new messages, they will keep building up in the queue and can eventually take the broker down with an OutOfMemoryError.
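The flip side of durability can be seen in another toy model in plain C# (hypothetical names, no broker): a durable queue outlives its consumer, so messages sent while the consumer is offline accumulate in a `Queue<string>` and are drained only when the consumer reconnects. Nothing in the model caps the backlog, which mirrors the risk described above.

```csharp
using System;
using System.Collections.Generic;

// Toy model of a durable queue: it outlives its consumer, so messages sent
// while the consumer is offline pile up until the consumer comes back.
var durableQueue = new Queue<string>();
var consumerOnline = true;
var processed = new List<string>();

void Send(string message)
{
    durableQueue.Enqueue(message);
    if (consumerOnline)
        processed.Add(durableQueue.Dequeue()); // an online consumer keeps up
}

void Reconnect()
{
    consumerOnline = true;
    while (durableQueue.Count > 0)             // drain the backlog, oldest first
        processed.Add(durableQueue.Dequeue());
}

Send("Message 1");
consumerOnline = false;                        // the consumer goes down
for (var i = 2; i <= 5; i++)
    Send($"Message {i}");

var backlog = durableQueue.Count;
Console.WriteLine($"Backlog while offline: {backlog}"); // prints Backlog while offline: 4
Reconnect();
Console.WriteLine($"Processed: {string.Join(", ", processed)}");
```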

If a single instance of our consumer app can’t keep up with the rate of incoming messages, we can easily scale it out by attaching additional consumers to the same queue. As a result, we end up with a configuration very similar to the one we started with in the initial demo:

The true power of the multicast routing type reveals itself when we start creating multiple shared, durable queues under the same address. The typical approach is to create a single queue per use case (in the microservices world, that means one per microservice). With each new queue, we create a separate, independent stream of messages. That’s something that isn’t possible with the anycast routing type.
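The fan-out-then-compete pattern can be sketched with one more toy model in plain C#. The address fans every message out to one durable queue per use case (hypothetical `billing` and `shipping` queues), and within a queue the attached consumers take turns. Strict round-robin within a queue is an assumption of the model.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Toy model: one durable queue per use case under a multicast address.
// Every queue gets every message (fan-out); consumers on the same queue
// compete for it (round-robin). Queue and consumer names are hypothetical.
var subscriptions = new Dictionary<string, string[]>
{
    ["billing"] = new[] { "billing-1", "billing-2" },
    ["shipping"] = new[] { "shipping-1" },
};
var received = subscriptions.Values.SelectMany(c => c).ToDictionary(c => c, _ => new List<int>());
var cursor = subscriptions.Keys.ToDictionary(q => q, _ => 0);

for (var message = 1; message <= 6; message++)
{
    foreach (var (queue, consumers) in subscriptions)  // multicast: every queue
    {
        var consumer = consumers[cursor[queue]];        // competing consumers within a queue
        cursor[queue] = (cursor[queue] + 1) % consumers.Length;
        received[consumer].Add(message);
    }
}

foreach (var (consumer, messages) in received)
    Console.WriteLine($"{consumer}: {string.Join(", ", messages)}");
```

`shipping-1` receives all six messages, while `billing-1` and `billing-2` split the same six between them, so each use case gets its own independent stream.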

Anycast & Multicast Routing Type

I’m including the anycast & multicast routing type configuration here just for the sake of completeness. Given the power of multicast, it’s pretty difficult to find a reasonable example where this kind of setup would be justified.

While not typically recommended, you can define an address with both routing types. The broker’s behavior in this scenario is pretty interesting, as a message sent to such an address may take one of three routes.

1) When you send a message using a producer attached with the anycast routing type, it is handled as if the address were anycast. Messages are distributed among all the consumers attached using the anycast routing type. If you happen to have any multicast queues defined under this address, they are excluded from processing.

2) When you send a message using a producer attached with the multicast routing type, it is handled as if the address were multicast. Each multicast queue gets its own copy of the message. If you happen to have any anycast queues defined under this address, they are ignored.

3) When you send a message using a producer attached without any routing type, the broker behaves as if two messages were sent, one with the anycast routing type and the other with the multicast routing type, so the end result is the combination of variants (1) and (2).
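The three routes can be captured in a final toy model in plain C#. Each queue is tagged with the routing type it was created with, and the producer’s routing type, or `null` when none was given, decides which queues take part. Queue names are hypothetical, and anycast selection is assumed to be strict round-robin.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Toy model of an address created with both routing types. Each queue is
// tagged by the routing type it was created with; the producer's routing
// type (null when none was specified) selects which queues take part.
var anycastQueues = new[] { "a1", "a2" };
var multicastQueues = new[] { "m1", "m2" };
var delivered = anycastQueues.Concat(multicastQueues).ToDictionary(q => q, _ => new List<string>());
var nextAnycast = 0;

void Send(string message, string? routingType)
{
    if (routingType is null or "Anycast")
    {
        // Route 1: one anycast queue, round-robin; multicast queues are skipped.
        delivered[anycastQueues[nextAnycast]].Add(message);
        nextAnycast = (nextAnycast + 1) % anycastQueues.Length;
    }

    if (routingType is null or "Multicast")
    {
        // Route 2: every multicast queue gets a copy; anycast queues are skipped.
        foreach (var queue in multicastQueues)
            delivered[queue].Add(message);
    }
    // Route 3 (routingType == null) is simply both branches combined.
}

Send("A", "Anycast");
Send("M", "Multicast");
Send("N", null);

foreach (var (queue, messages) in delivered)
    Console.WriteLine($"{queue}: {string.Join(", ", messages)}");
```

`A` lands in a single anycast queue, `M` is copied to both multicast queues, and `N` does both.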

Summary

In this blog post, you learned how the Apache ActiveMQ Artemis address model works. I presented how its different aspects can be used to shape the behavior of your applications. If any points are not clear, or you have any questions in general, feel free to leave a comment below!
